Building long-horizon robot agents requires composing closed-loop pipelines (perception, belief, planning, and control) whose components run on different clocks and with variable latency. Retriever is a programming model and runtime that makes timing and input-consumption semantics explicit: it represents an agent as a graph of stateful, causal stream functions that execute on explicit run clocks and are connected by edgewise synchronization policies. The runtime logs the input snapshot consumed at each step, enabling deterministic replay and systematic debugging across execution backends.

Problem: What breaks in practice

Modern robot systems are typically assembled as closed-loop, modular pipelines in which slow semantic reasoning runs alongside medium-rate skill execution and high-rate feedback control. However, implementing such pipelines is brittle in today’s stacks: blocking on slow model calls can stall real-time control, while ad-hoc non-blocking access yields stale decisions and schedule-dependent behavior that is difficult to debug or replay.

Key Idea: Make time and sampling explicit at module boundaries

Retriever addresses this mismatch by making temporal coordination explicit and composable in the program itself, rather than leaving it as an implicit implementation detail hidden in glue code. Each module declares a run clock (periodic or event-triggered), and each edge declares a deterministic synchronization policy that defines which part of the upstream history is consumed at each downstream tick. During execution, the runtime records the consumed input IDs for each step so the same graph can be replayed deterministically from logged asynchronous data.
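To make the record-and-replay idea concrete, here is a minimal sketch in plain Python. It is not Retriever's API: `StepLog`, `run_live`, `replay`, and the `"cam#k"` message IDs are hypothetical names chosen for illustration. The point is only that once the consumed IDs are logged per tick, a stateful step function can be re-run without reference to live timing.

```python
from dataclasses import dataclass, field


@dataclass
class StepLog:
    """Per-tick record of which input message IDs a flow consumed (hypothetical format)."""
    entries: list = field(default_factory=list)  # [(tick_time, {edge_name: msg_id}), ...]

    def record(self, tick_time, consumed_ids):
        self.entries.append((tick_time, dict(consumed_ids)))


def run_live(step_fn, ticks, pick_inputs, store, log):
    """Live run: choose inputs at each tick, log their IDs, then step exactly once."""
    outputs = []
    for t in ticks:
        ids = pick_inputs(t)  # stands in for the result of the edge policies
        log.record(t, ids)
        outputs.append(step_fn({edge: store[mid] for edge, mid in ids.items()}))
    return outputs


def replay(step_fn, store, log):
    """Replay: ignore timing and feed back exactly the logged IDs, in order."""
    return [step_fn({edge: store[mid] for edge, mid in ids.items()})
            for _, ids in log.entries]


def make_accumulator():
    """A tiny stateful flow: keeps a running sum of the camera values it has seen."""
    total = 0.0

    def step(inp):
        nonlocal total
        total += inp["cam"]
        return total

    return step


store = {"cam#0": 1.0, "cam#1": 2.5, "cam#2": 4.0}
log = StepLog()
live = run_live(make_accumulator(), [0.0, 0.5, 1.0],
                lambda t: {"cam": f"cam#{int(t * 2)}"}, store, log)
replayed = replay(make_accumulator(), store, log)
```

Because the replay path never consults a clock, the two output lists match as long as the step function is deterministic given its inputs and local state.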

Figure-First Model Explanation

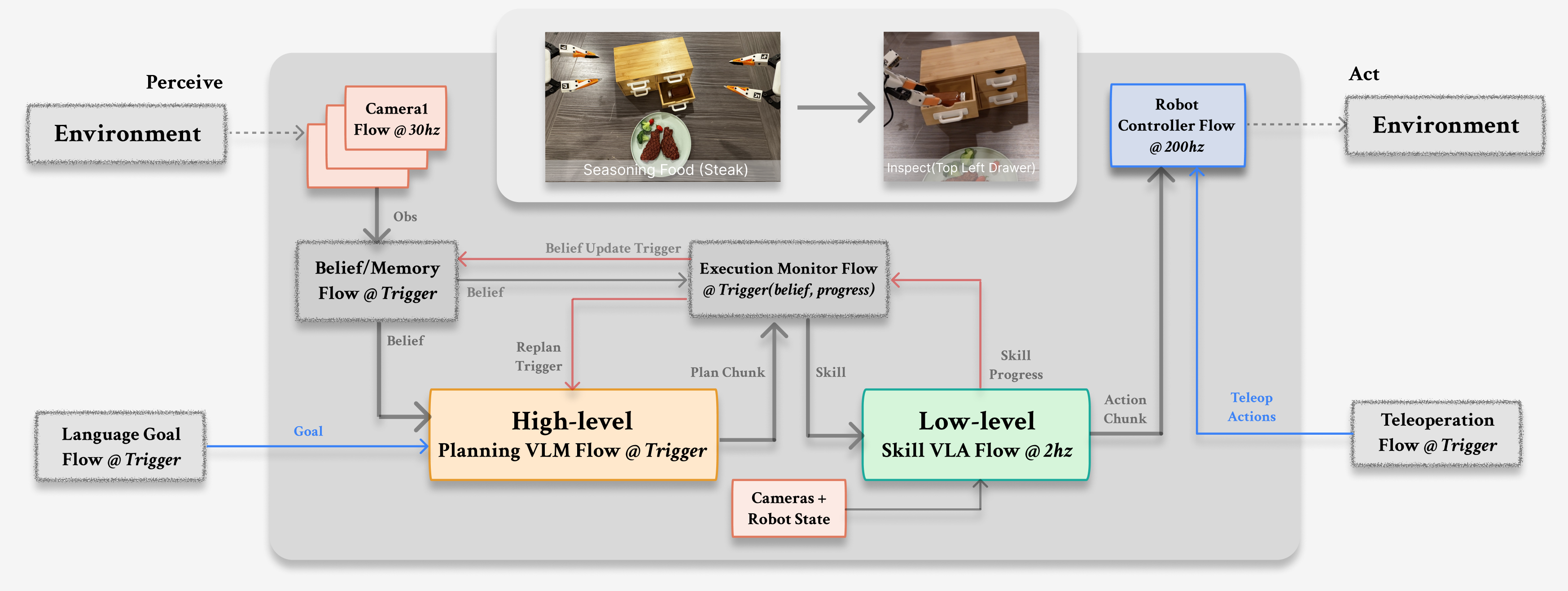

Canonical multi-rate closed-loop pipeline

This canonical multi-rate closed-loop agent combines slow VLM planning (with belief and memory), execution monitoring, medium-rate skill execution via a VLA, and high-rate control.

The pipeline illustrates a common multi-rate closed loop in which slow planning overlaps with medium-rate skill execution and high-rate control. A representative configuration has the following typical rates:

- Camera and robot-state streams typically arrive at around 30Hz and provide the high-bandwidth observations needed by the rest of the system.

- The planner runs on triggers or a low-rate clock, because high-level reasoning is comparatively slow and often event-driven.

- The skill policy executes at roughly 2-3Hz, where it can incorporate synchronized context and emit short action chunks.

- The controller consumes those chunks and closes the loop at roughly 200Hz, preserving the fast timing needed for control.
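As a rough illustration of what these rates imply, the sketch below merges three periodic clocks into one ordered tick schedule. The skill rate is fixed at 2 Hz (the lower end of the quoted range), the event-triggered planner is omitted, and the function names are assumptions made for this example rather than part of Retriever.

```python
import heapq
from fractions import Fraction

# Rates from the list above; the skill policy is taken at 2 Hz for simplicity.
RATES_HZ = {"camera": 30, "skill": 2, "controller": 200}


def tick_times(name, hz, horizon_s):
    """Yield (time, name) for a periodic clock, using exact rational arithmetic."""
    period = Fraction(1, hz)
    k = 0
    while k * period < horizon_s:
        yield (k * period, name)
        k += 1


def merged_schedule(horizon_s):
    """Merge all periodic clocks into one time-ordered list of ticks."""
    streams = [tick_times(name, hz, horizon_s) for name, hz in RATES_HZ.items()]
    return list(heapq.merge(*streams))


schedule = merged_schedule(Fraction(1))  # one second of ticks
counts = {name: sum(1 for _, n in schedule if n == name) for name in RATES_HZ}
ctrl_per_skill = Fraction(RATES_HZ["controller"], RATES_HZ["skill"])
ctrl_per_frame = Fraction(RATES_HZ["controller"], RATES_HZ["camera"])
```

With these numbers the controller steps 100 times per skill tick and 20/3 times per camera frame, which is why the downstream modules need an explicit rule for reusing upstream outputs between updates.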

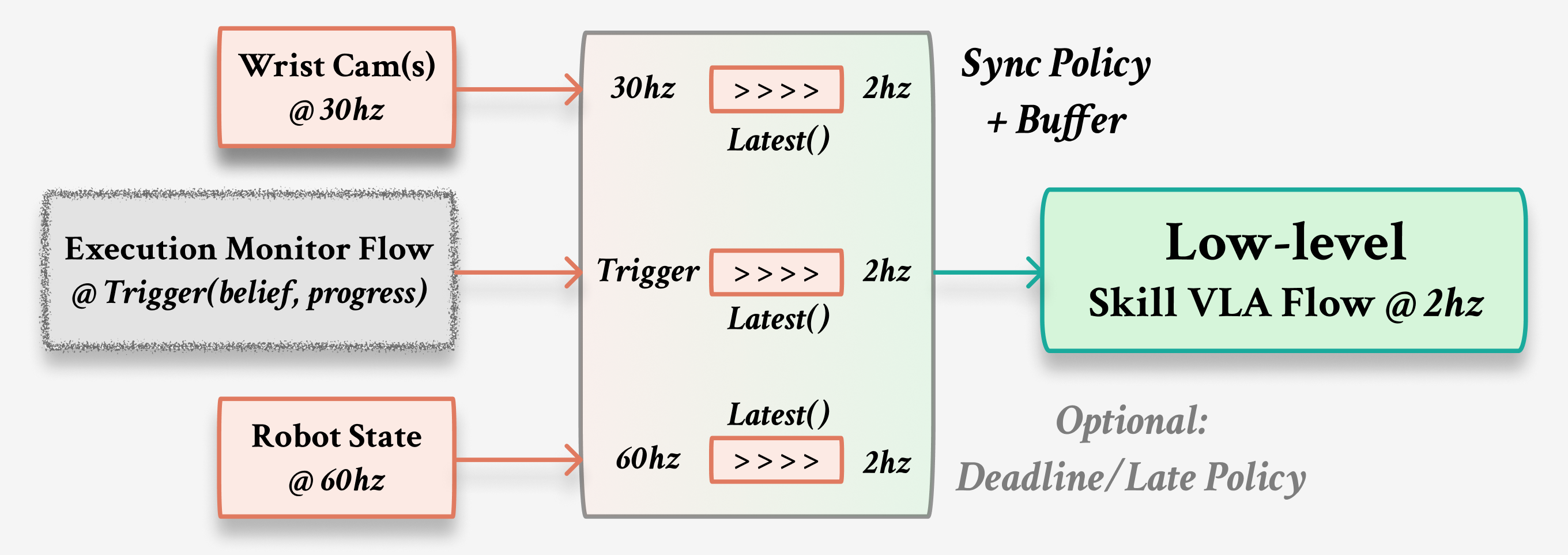

Synchronization policies

Explicit edge synchronization replaces implicit “latest message” behavior with deterministic snapshot rules such as Latest(staleness), Window, and Queue.

Operationally, when a downstream flow wakes at a tick, the runtime samples each incoming stream according to the policy attached to that edge, constructs a single aligned input bundle, and then calls the flow’s synchronous step exactly once. For example, Latest(staleness=...) implements sample-and-hold with an explicit bounded-staleness contract, Window(...) gathers a short history for aggregation, and Queue(...) consumes events in FIFO order. Because these snapshot rules are explicit, the program’s meaning is independent of sub-millisecond scheduling jitter.
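The three snapshot rules can be sketched as pure functions over a timestamped history. This is an illustrative reading, not Retriever's implementation: the `Msg` type, the function names, the half-open window convention, and the choice to return `None` when the staleness bound is violated are all assumptions.

```python
from collections import deque
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Msg:
    t: float    # event timestamp in seconds
    value: Any


def latest(history, tick, staleness=None):
    """Sample-and-hold: newest message with t <= tick, or None if absent or too old."""
    candidates = [m for m in history if m.t <= tick]
    if not candidates:
        return None
    newest = max(candidates, key=lambda m: m.t)
    if staleness is not None and tick - newest.t > staleness:
        return None  # assumed behavior when the staleness contract is violated
    return newest


def window(history, tick, duration):
    """Short history: messages with tick - duration < t <= tick, oldest first."""
    return sorted((m for m in history if tick - duration < m.t <= tick),
                  key=lambda m: m.t)


def queue_pop(pending, tick, max_items=None):
    """FIFO: consume pending events stamped at or before the tick, in order."""
    out = []
    while pending and pending[0].t <= tick and (max_items is None or len(out) < max_items):
        out.append(pending.popleft())
    return out


history = [Msg(0.0, "a"), Msg(0.033, "b"), Msg(0.066, "c")]
fresh = latest(history, tick=0.1, staleness=0.1)
stale = latest(history, tick=0.5, staleness=0.1)
recent = window(history, tick=0.07, duration=0.05)
pending = deque([Msg(0.01, "e1"), Msg(0.02, "e2"), Msg(0.2, "e3")])
events = queue_pop(pending, tick=0.1)
```

Each function depends only on event timestamps and the tick time, so the snapshot it returns does not change with the order in which messages happened to be delivered.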

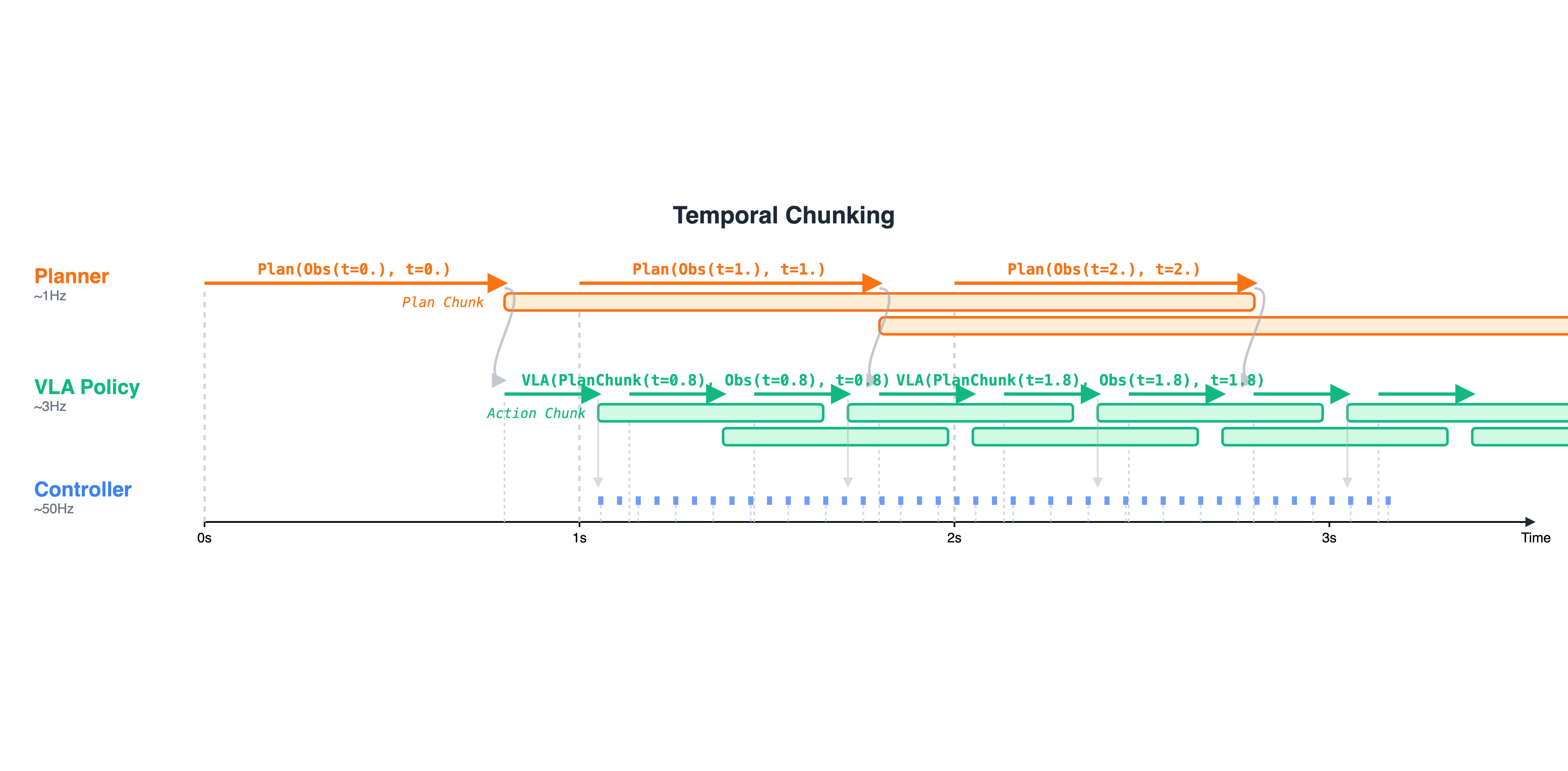

Temporal chunking

Slow producers emit time-extended chunks, and fast consumers reuse the latest valid chunk on their own clock.

Temporal chunking treats outputs as time-extended objects produced asynchronously but consumed on a downstream clock under an explicit reuse or commit rule. In the case-study pipeline, the skill policy emits an ActionChunk so the controller can run at 200Hz even when policy inference is slower. Similarly, the execution monitor buffers plan proposals and commits plan chunks only at safe points, which allows planning to overlap with execution without mid-skill rewrites.
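A minimal sketch of the reuse rule on the controller side follows. The `ActionChunk` fields, the scalar actions, and the fallback command are assumptions for illustration; the source only states that the controller consumes the latest valid chunk on its own clock.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ActionChunk:
    t0_ms: int                 # event time at which the first action applies
    dt_ms: int                 # spacing between consecutive actions
    actions: Sequence[float]   # one scalar command per step, for illustration

    def action_at(self, t_ms: int) -> Optional[float]:
        """Return the action scheduled for time t_ms, or None outside the chunk."""
        if t_ms < self.t0_ms:
            return None
        idx = (t_ms - self.t0_ms) // self.dt_ms
        return self.actions[idx] if idx < len(self.actions) else None


def controller_trace(chunks, tick_ms, horizon_ms, fallback=0.0):
    """Run a fixed-rate controller that reuses the newest chunk available at each tick."""
    trace = []
    for t in range(0, horizon_ms, tick_ms):
        available = [c for c in chunks if c.t0_ms <= t]  # chunks emitted so far
        cmd = None
        if available:
            cmd = max(available, key=lambda c: c.t0_ms).action_at(t)
        # An exhausted chunk yields None, so the controller falls back to a safe command.
        trace.append(fallback if cmd is None else cmd)
    return trace


# Two chunks from a slow policy, consumed by a 200Hz (5 ms) controller.
chunks = [ActionChunk(0, 10, [1.0, 2.0]), ActionChunk(30, 10, [5.0])]
trace = controller_trace(chunks, tick_ms=5, horizon_ms=45)
```

In this trace the controller holds each action across two of its own ticks, falls back to the safe command in the gap between chunks, and switches to the second chunk as soon as it becomes available.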

Runtime Contributions

Retriever provides a small set of runtime mechanisms that make this style of programming practical.

At the user level, the typed Flow interface gives each module local state and synchronous step semantics, so composition remains modular and explicit. Under the hood, Retriever compiles the graph to an intermediate representation on which topology, clocks, and synchronization policies can be validated before execution, and it can dispatch the same program to different execution backends without changing the user-level definition. Because execution is grounded in event-time semantics and logged input snapshots, the runtime can support deterministic replay across runs.
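The kind of pre-execution check described here might look like the following sketch. The `Node` and `Edge` records and the specific rules are hypothetical; the source does not describe the actual intermediate representation, only that topology, clocks, and policies are validated before running.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Node:
    name: str
    rate_hz: Optional[float] = None   # periodic clock, or
    trigger: Optional[str] = None     # event-triggered clock


@dataclass(frozen=True)
class Edge:
    src: str
    dst: str
    policy: str                       # e.g. "Latest", "Window", "Queue"


KNOWN_POLICIES = {"Latest", "Window", "Queue", "ActionChunking"}


def validate(nodes, edges):
    """Return a list of problems; an empty list means the graph passed all checks."""
    problems = []
    names = [n.name for n in nodes]
    if len(names) != len(set(names)):
        problems.append("duplicate node names")
    for n in nodes:
        has_rate = n.rate_hz is not None
        has_trigger = n.trigger is not None
        if has_rate == has_trigger:
            problems.append(f"{n.name}: declare exactly one of rate_hz or trigger")
        elif has_rate and n.rate_hz <= 0:
            problems.append(f"{n.name}: rate_hz must be positive")
    known = set(names)
    for e in edges:
        for end in (e.src, e.dst):
            if end not in known:
                problems.append(f"edge {e.src}->{e.dst}: unknown node {end}")
        if e.policy not in KNOWN_POLICIES:
            problems.append(f"edge {e.src}->{e.dst}: unknown policy {e.policy}")
    return problems


good = validate(
    [Node("cam", rate_hz=30), Node("planner", trigger="belief_updated"),
     Node("vla", rate_hz=2), Node("ctrl", rate_hz=200)],
    [Edge("cam", "vla", "Latest"), Edge("vla", "ctrl", "ActionChunking")],
)
bad = validate(
    [Node("cam", rate_hz=30), Node("vla", rate_hz=2), Node("bad")],
    [Edge("cam", "vla", "Latest"), Edge("vla", "ctrl", "Newest")],
)
```

Collecting all problems instead of raising on the first one is a design choice of this sketch; it lets a miswired pipeline be fixed in one pass.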

Example Code

The two core Retriever snippets below show the programming model directly: a VLA flow that consumes a synchronized snapshot, and the main multi-rate pipeline wiring.

VLA flow example

This is the low-level skill flow pattern: at each 2Hz tick, the flow receives an already-aligned input snapshot and emits an ActionChunk. In the case study, the same skill module also reports progress feedback as a normalized score back to the execution monitor.

    # Example: a VLA skill flow running at 2Hz.
    # Inputs: wrist-camera observation, robot state, and the active skill command.
    # Output: an ActionChunk so policy inference latency is decoupled from fast control.
    # In Retriever-0, the skill flow also predicts progress p in [0,1] for the monitor.
    class VLASkillFlow(Flow):
        def reset(self):
            # Flow-local state stays inside the module; no hidden global callbacks.
            self.cur_skill = None

        def step(self, inp):
            # At this tick, Retriever has already applied each incoming edge policy
            # and constructed one deterministic synchronized snapshot for this step.
            cam_obs, robot_state, skill_cmd = inp

            # The execution monitor decides which skill is currently active.
            self.cur_skill = skill_cmd

            # Emit a short action chunk for a downstream high-rate controller.
            # Progress feedback is treated as a separate stream to the monitor.
            return self.vla(cam_obs, robot_state, self.cur_skill)

Conceptually, the monitor-side message is Progress = { "skill_id": str, "p": float }, where p is a normalized progress prediction.

Case-study pipeline wiring

This wiring shows how planning, monitoring, skill execution, and control are attached to different clocks, with synchronization policies declared edge-by-edge.

    # Run clocks make the multi-rate structure explicit.
    cam = CameraSource(id=0) @Rate(hz=30)
    belief = BeliefMemoryFlow() @Trigger("inspection_done")
    planner = VLMPlanFlow("gemini") @Trigger("belief_updated")
    monitor = ExecMonitorFlow() @Rate(hz=10)
    vla = VLASkillFlow("pi05") @Rate(hz=2)
    ctrl = ControllerFlow(id=0) @Rate(hz=200)
    teleop = TeleopFlow() @Trigger("teleop_event")

    with Pipeline("Agent") as pipe:
        # Sample-and-hold camera input for the 2Hz skill policy, but bound staleness.
        cam.then(vla, sync=Latest(staleness=0.1))
        # Stream short action chunks from the slow policy to the fast controller.
        vla.then(ctrl, sync=ActionChunking(horizon_ms=50))

        # Belief and planning run on their own triggers, not on the control clock.
        cam.then(belief, sync=Latest())
        belief.then(planner, sync=Latest())
        planner.then(monitor, sync=Latest())  # asynchronous plan proposals
        vla.then(monitor, sync=Latest())  # progress feedback p in [0,1]
        teleop.then(monitor, sync=Latest())  # optional override and logging
        monitor.then(vla, sync=Latest())  # monitor emits one active skill_cmd at a time

        # Plan chunking is handled inside the monitor: proposals may arrive anytime,
        # but plan commits happen only at safe points to avoid mid-skill rewrites.
        # Skill switching is separate: the monitor resets progress on each switch
        # and treats a skill as complete only when p >= 0.9 is sustained for ~0.5s.

In this example, run clocks (Rate and Trigger) describe when each module is allowed to step, while edge policies (Latest(...) and ActionChunking(...)) describe what each module consumes at its own tick. The execution monitor buffers plan proposals and commits them only at safe points (plan chunking), and it switches skills using progress feedback (with stale-feedback mitigation via progress resets and a sustained-completion rule such as p >= 0.9 for about 0.5s). The skill flow remains a synchronous state machine; cross-rate coordination lives in the pipeline wiring and runtime semantics rather than in ad hoc callback logic.
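The sustained-completion rule can be sketched as a small state machine. This is one possible reading of the rule described above, not the monitor's actual code: the class name, the explicit `switch_to` call, and the choice to ignore feedback tagged with a non-active skill are assumptions.

```python
class CompletionDetector:
    """Report completion once progress stays >= threshold for hold_s seconds."""

    def __init__(self, threshold=0.9, hold_s=0.5):
        self.threshold = threshold
        self.hold_s = hold_s
        self.active = None        # skill currently being executed
        self.above_since = None   # time at which p first reached the threshold

    def switch_to(self, skill_id):
        """Activate a new skill and reset progress tracking."""
        self.active = skill_id
        self.above_since = None

    def update(self, t, skill_id, p):
        """Feed one Progress message; return True when the active skill is complete."""
        if skill_id != self.active:
            return False          # stale feedback from a previous skill is ignored
        if p < self.threshold:
            self.above_since = None   # a dip restarts the hold timer
            return False
        if self.above_since is None:
            self.above_since = t
        return t - self.above_since >= self.hold_s


det = CompletionDetector()
det.switch_to("open_drawer")
first = [det.update(t, "open_drawer", p)
         for t, p in [(0.0, 0.95), (0.25, 0.92), (0.5, 0.97)]]

det.switch_to("grasp")
second = [
    det.update(0.75, "open_drawer", 0.99),  # late message from the old skill
    det.update(1.0, "grasp", 0.95),
    det.update(1.25, "grasp", 0.5),         # dip below threshold
    det.update(1.5, "grasp", 0.95),
    det.update(2.0, "grasp", 0.95),
]
```

The reset on each switch prevents a high score left over from the previous skill from completing the new one immediately, and the hold timer filters out single-sample spikes.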

Experiments

We evaluate Retriever along three axes: (1) capability on long-horizon manipulation tasks that require coordinating slow reasoning, medium-rate skills, and high-rate control; (2) efficiency, including message transport/runtime overhead and task-level ablations; and (3) usability, including determinism and replay. The figures below highlight representative measurements used in the paper.

To read this section as a running example, start from the pipeline above. The latency benchmark isolates the overhead of moving data between flows. The hybrid-dynamics stress test isolates the effect of event-time versus arrival-time semantics on trace stability. The robot evaluation then exercises the full closed-loop stack (planning, monitoring, skills, and control) under heterogeneous clocks.

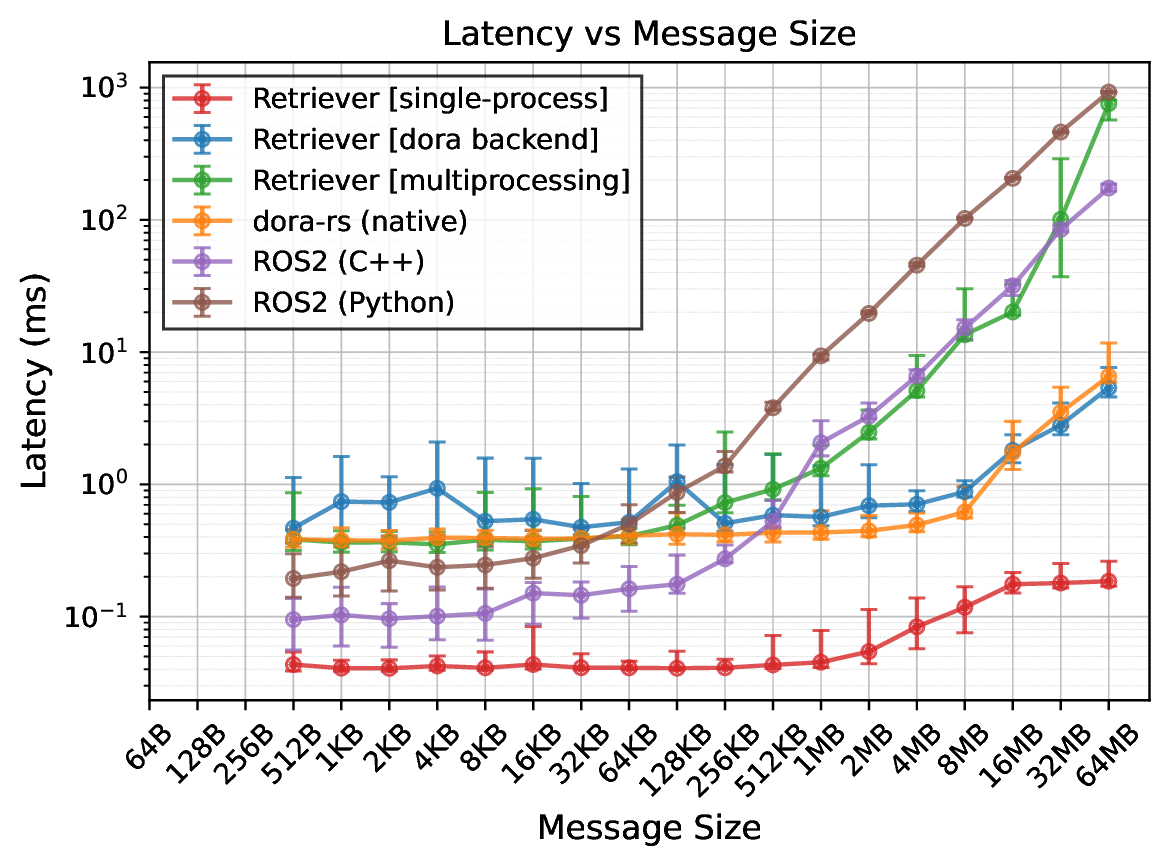

Message latency benchmark. We benchmark end-to-end message latency as a function of payload size to isolate transport and runtime overhead in a minimal producer-consumer setup. In the paper, Retriever with a Dora backend closely tracks native transport baselines across payload sizes, suggesting that the graph IR, clocks, and synchronization policies add little overhead relative to transport.

Concretely, this benchmark uses a minimal two-node pipeline (a producer flow that emits a payload and a consumer flow that timestamps receipt), repeats many trials per payload size, and plots latency on log-log axes. The goal is not to optimize a single microkernel, but to show that making clocks and synchronization explicit does not force a large constant-factor penalty.

End-to-end message latency versus payload size (log-log), used to benchmark transport and runtime overhead in a minimal producer-consumer pair.

Determinism stress test (hybrid dynamics). To stress trace sensitivity under asynchronous execution, we use a hybrid differentiable physics example in which discrete events (impacts) make the realized computation graph path-dependent. This provides a clean setting to compare event-time semantics (Retriever) against arrival-time pub/sub semantics under jitter-induced staleness.

Intuitively, a one-tick difference in when an impact guard triggers changes which operations are executed, so the backward pass differentiates through a different computation graph. We therefore treat this as a stress test for the runtime semantics: if input snapshots are schedule-dependent, traces can diverge across runs even when the initial conditions are identical.

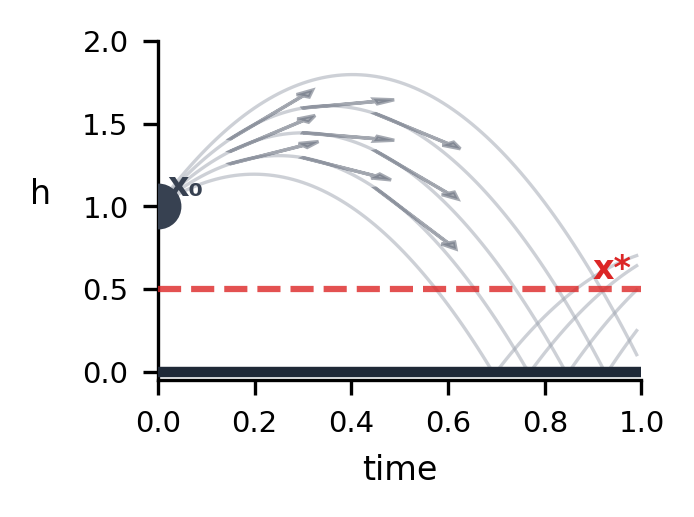

Hybrid differentiable physics example used to stress trace sensitivity under asynchronous semantics.

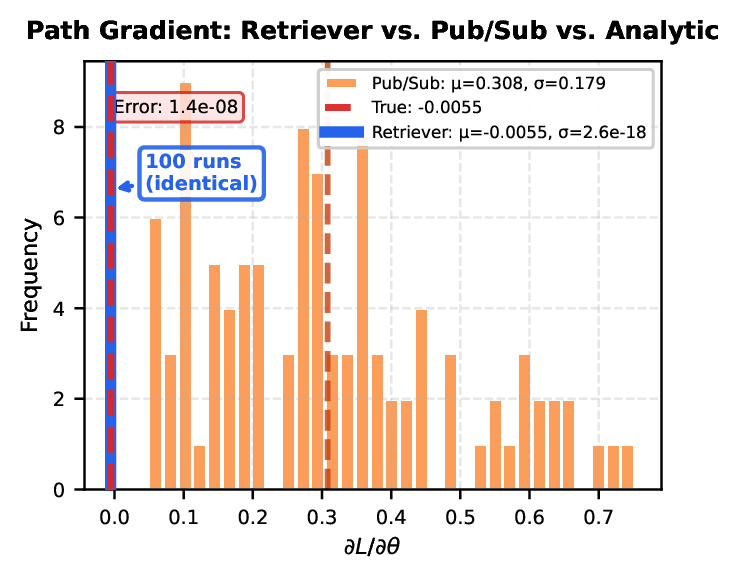

Path-gradient consistency under replay. We report the distribution of path gradients across repeated runs. In the paper’s configuration, event-time semantics yield a single trace and a collapsed gradient distribution, while arrival-time semantics produce multiple traces (and hence inconsistent gradients) due to scheduling jitter and stale reads.

A practical way to interpret this plot is: if you can replay a log and guarantee that each flow consumes the same input IDs at each tick, then both the forward trace and the path gradient are stable. If input consumption depends on arrival order, then the same nominal run can map to multiple traces, which shows up as a spread of gradients.
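The contrast can be reproduced in a toy setting without any physics. The sketch below uses hand-picked delivery delays in place of real scheduling jitter, and it assumes the runtime can resolve a snapshot by event timestamp (for example from a log during replay). All names and numbers are illustrative and unrelated to the paper's experiment.

```python
def arrival_time_trace(event_ms, delays_ms, ticks_ms):
    """Each tick reads whichever message arrived most recently by wall-clock time."""
    trace = []
    for tick in ticks_ms:
        arrived = [(e + d, i) for i, (e, d) in enumerate(zip(event_ms, delays_ms))
                   if e + d <= tick]
        trace.append(max(arrived)[1] if arrived else None)
    return trace


def event_time_trace(event_ms, ticks_ms):
    """Each tick reads the newest message whose event timestamp is <= the tick."""
    trace = []
    for tick in ticks_ms:
        eligible = [i for i, e in enumerate(event_ms) if e <= tick]
        trace.append(eligible[-1] if eligible else None)  # event_ms is ascending
    return trace


EVENT_MS = [0, 10, 20, 30]   # producer event timestamps
TICKS_MS = [5, 15, 25, 35]   # consumer ticks
DELAY_RUNS = [               # per-message delivery delay in three nominally identical runs
    [0, 0, 0, 0],
    [0, 12, 0, 0],
    [0, 0, 0, 7],
]

arrival_traces = {tuple(arrival_time_trace(EVENT_MS, d, TICKS_MS)) for d in DELAY_RUNS}
event_traces = {tuple(event_time_trace(EVENT_MS, TICKS_MS)) for _ in DELAY_RUNS}
```

Here the three runs produce three distinct arrival-time traces but a single event-time trace. Any quantity computed downstream of the consumed inputs, including a gradient, inherits that spread or that stability.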

Distribution of path gradients across repeated runs: event-time semantics collapse to a single trace/gradient, while arrival-time semantics can yield trace divergence and gradient variability.

Robot setup and tasks (capability evaluation)

We deploy the case-study pipeline on a bimanual manipulation platform and evaluate two long-horizon tasks that stress asynchronous closed-loop composition: retrieving a target object from a deformable bag and seasoning with drawers. These tasks require coordinating memory and belief updates under partial observability, slow VLM reasoning for planning and monitoring, medium-rate skill execution, and high-rate control. The drawer task additionally includes explicit information-gathering steps (inspecting drawers) that update belief and trigger conditional planning and monitoring logic.

In the paper, task progress is computed by the execution monitor as a normalized completion score, and we report time-to-best-progress rather than a fixed completion time (since ablations may fail early or plateau). This evaluation is designed to connect directly back to the pipeline semantics: plan chunking enables overlap between planning and execution, and progress feedback enables progress-guided switching that is more robust than timeout-only sequencing under asynchronous latency.
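One plausible way to compute time-to-best-progress from a logged progress series is sketched below. The exact definition used in the paper is not given here, so the function name, the sample format, and the tie-breaking rule (earliest time at which the maximum is reached) are assumptions.

```python
def time_to_best_progress(samples):
    """Return (best_p, t_first): the maximum progress and the earliest time it was reached.

    `samples` is a sequence of (time_s, progress) pairs with progress in [0, 1].
    """
    if not samples:
        raise ValueError("need at least one (time, progress) sample")
    best_p = max(p for _, p in samples)
    t_first = min(t for t, p in samples if p == best_p)
    return best_p, t_first


# A run that plateaus at 0.6 and later regresses, and a run that completes.
plateau = time_to_best_progress([(0, 0.0), (10, 0.4), (20, 0.6), (30, 0.6), (40, 0.55)])
complete = time_to_best_progress([(0, 0.0), (15, 0.5), (42, 1.0)])
```

Unlike a fixed completion time, this metric stays well defined for runs that never finish, which is the situation the ablations are expected to produce.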

Key takeaways

Across the benchmarks and stress tests, end-to-end latency overhead remains close to native transport baselines even though the programming model exposes more explicit structure. Event-time semantics improve replayability and lead to more stable trace and gradient behavior in the hybrid dynamics setup. In real-robot evaluation, the same multi-rate closed-loop composition supports long-horizon tasks without collapsing everything into ad hoc concurrency glue.

Conclusion

We introduce Retriever, a programming model and runtime for asynchronous, compositional robot agents. By making run clocks and edgewise synchronization policies explicit, Retriever supports modular closed-loop pipelines that operate efficiently and deterministically under real-world timing constraints. Our experiments demonstrate that Retriever enables the composition of planning, belief updates, progress monitoring, skill execution, and high-rate control in long-horizon manipulation, while improving replayability and debuggability.